I am a second-year Ph.D. student in Computer Science at The Ohio State University, advised by Prof. Wei-Lun (Harry) Chao. My research interests broadly lie in reliable machine learning under imperfect data and distribution shift, particularly in real-world settings.

I received my bachelor’s degree in Computer Engineering from Vietnam National University, Ho Chi Minh City, in 2020. From 2022 to 2024, I worked as a research assistant at the VinUni–Illinois Smart Health Center (VISHC) at VinUniversity and as a research resident at the FPT Software AI Center, mentored by Prof. Dung D. Le.

Email: nguyen.2959@osu.edu

My research interests center on three directions: (1) learning from imperfect data, (2) detecting the unknown and quantifying uncertainty, and (3) adapting to novel distributions and environments, with applications in medical imaging and animal behavior analysis. Learn more about my research here.

Detecting Out-of-Distribution Objects through Class-Conditioned Inpainting

Quang-Huy Nguyen*, Jin Zhou*, Zhenzhen Liu*, Huyen Bui, Kilian Q. Weinberger, Wei-Lun Chao, Dung D. Le

WACV 2026

We address out-of-distribution (OOD) object detection by leveraging the inconsistency between generative and discriminative model outputs. We employ an off-the-shelf generative model as an auxiliary to the object detector and introduce a triplet similarity metric that captures both semantic and visual differences, enabling effective OOD object detection in a zero-shot manner.

Revisiting Semi-Supervised Learning in the Era of Foundation Models

Zheda Mai*, Ping Zhang*, Quang-Huy Nguyen, Wei-Lun Chao

NeurIPS 2025

We present a comprehensive study on Semi-Supervised Learning (SSL) using Vision Foundation Models (VFMs) and propose a simple yet effective baseline that leverages diverse predictions from multiple Parameter-Efficient Fine-Tuning (PEFT) strategies to enhance SSL performance.

Lessons and Insights from a Unifying Study of Parameter-Efficient Fine-Tuning (PEFT) in Visual Recognition

Zheda Mai, Ping Zhang, Cheng-Hao Tu, Hong-You Chen, Quang-Huy Nguyen, Li Zhang, Wei-Lun Chao

CVPR 2025 Highlight (top 2.98%).

We present a unified empirical study of Parameter-Efficient Fine-Tuning (PEFT) methods in visual recognition, offering complementary perspectives on their behavior under different regimes (low-shot, many-shot, domain shift) and highlighting their complementary predictions and robustness trade-offs.

Improving Pareto Set Learning for Expensive Multi-objective Optimization via Stein Variational Hypernetworks

Minh-Duc Nguyen, Phuong Mai Dinh, Quang-Huy Nguyen, Long P. Hoang, Dung D. Le

AAAI 2025

We investigate Expensive Multi-Objective Optimization by introducing the Stein Variational Hypernetwork for Pareto Set Learning, which alleviates fragmented and uncertain regions in surrogate models while preserving the diversity of learned solutions, demonstrating strong performance on expensive multi-objective optimization problems.

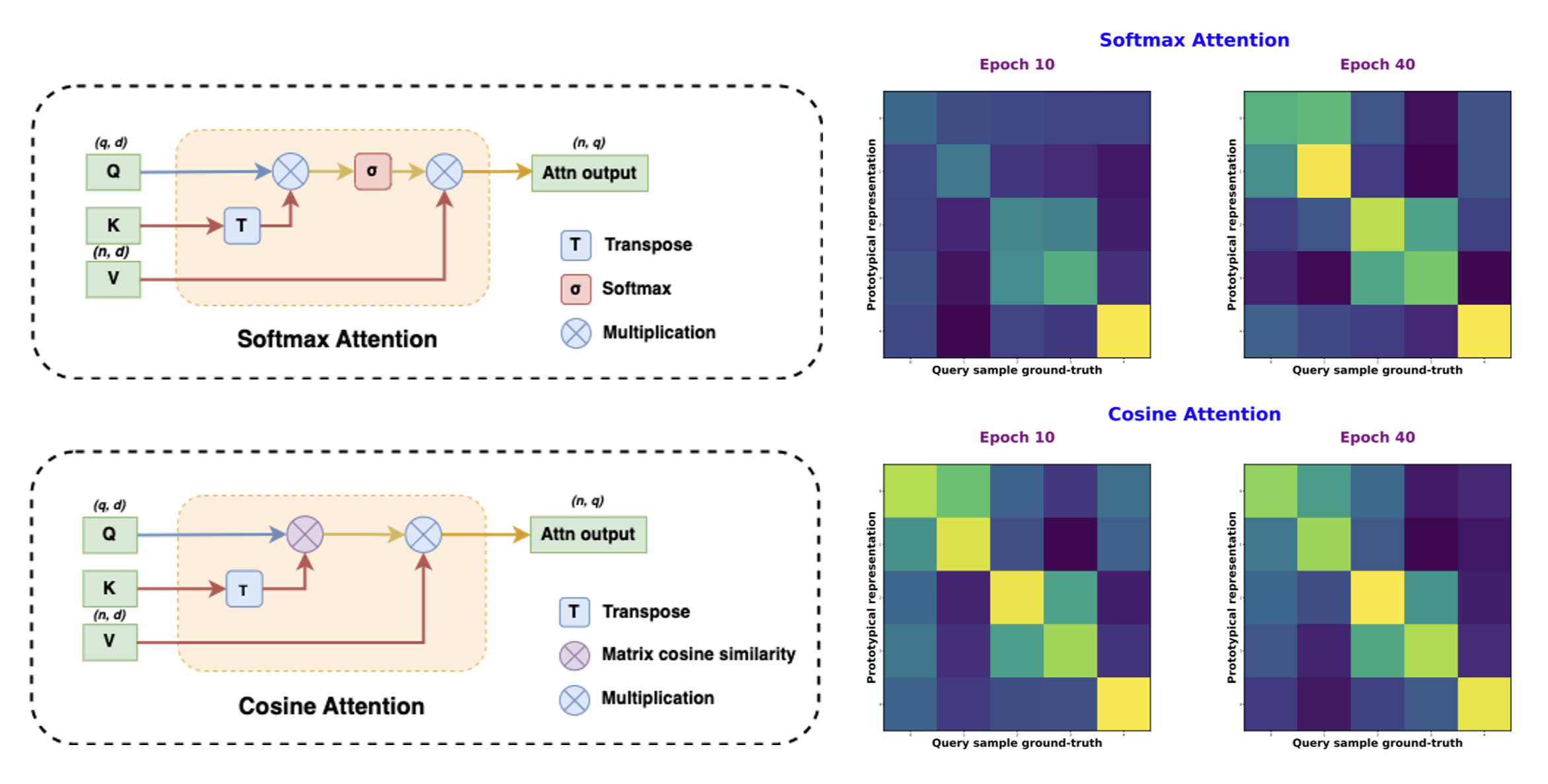

Enhancing Few-shot Image Classification with Cosine Transformer

Quang-Huy Nguyen, Cuong Q. Nguyen, Dung D. Le, Hieu H. Pham

IEEE Access 2023

We explore few-shot image classification by proposing a new cross-attention mechanism based on cosine similarity, without softmax, to further emphasize the correlation between labeled support and unlabeled query representations, thus enhancing ViT-based few-shot algorithms across various settings and scenarios compared to the conventional attention mechanism.

Nov, 2025: Our paper Detecting Out-of-Distribution Objects through Class-Conditioned Inpainting was accepted to WACV 2026.

Sep, 2025: Our paper Revisiting Semi-Supervised Learning in the Era of Foundation Models was accepted to NeurIPS 2025.

Apr, 2025: Our paper Lessons and Insights from a Unifying Study of Parameter-Efficient Fine-Tuning (PEFT) in Visual Recognition was accepted to CVPR 2025 as a Highlight.

Dec, 2024: Our paper Improving Pareto Set Learning for Expensive Multi-objective Optimization via Stein Variational Hypernetworks was accepted to AAAI 2025.

Aug, 2024: I became a Ph.D. student in Computer Science and Engineering at The Ohio State University, advised by Prof. Wei-Lun (Harry) Chao.

Aug, 2023: I participated in the 10th Vietnam Summer School of Science at the International Centre for Interdisciplinary Science and Education, Quy Nhon, Vietnam.

Aug, 2023: I became an AI Research Resident at the FPT Software AI Center, Ho Chi Minh City.

Jul, 2023: Our paper Few-shot Cosine Transformer was accepted to IEEE Access.

Feb, 2023: I became a Research Assistant at the College of Engineering and Computer Science, VinUniversity.

Jan, 2022: I became a Research Assistant at the VinUni-Illinois Smart Health Center (VISHC), VinUniversity.

Dec, 2020: I obtained a bachelor's degree in Computer Engineering from the University of Information Technology, VNU-HCM.

Jul, 2019: I became a Research Assistant at the Faculty of Computer Engineering, University of Information Technology, VNU-HCM.